## The Neural Core of BioLens.AI

Identifying a flower isn't just about matching colors; it's about recognizing subtle mathematical patterns in petal geometry, leaf venation, and floral symmetry. BioLens.AI uses a custom-tuned EfficientNetV2 architecture, a state-of-the-art Convolutional Neural Network (CNN) that balances inference speed with high classification accuracy.

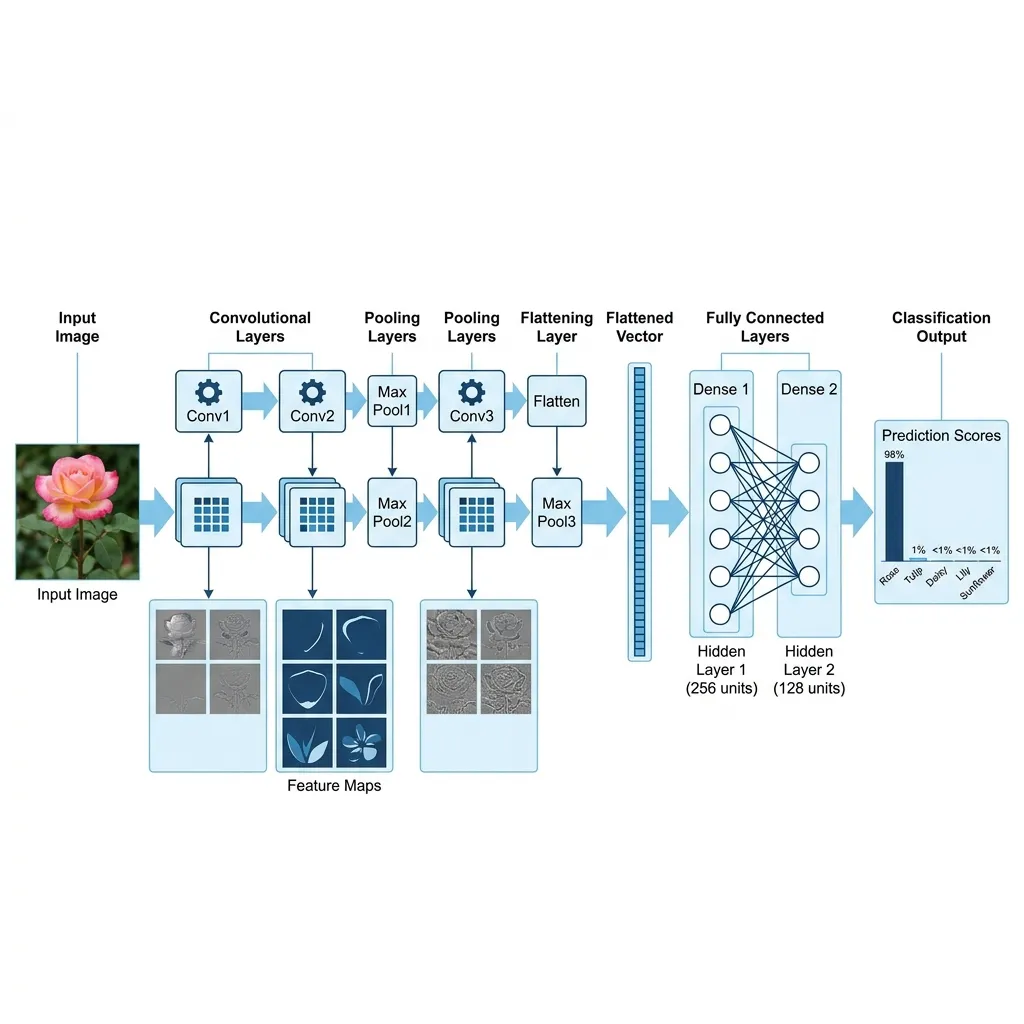

## A) What is a Convolutional Neural Network (CNN)?

At its heart, BioLens uses a CNN, a type of deep learning algorithm specifically designed for visual data. Unlike traditional rule-based software, a CNN doesn't match hand-coded pixel patterns. Instead, it passes an image through multiple 'hidden layers' that learn to extract features at increasing levels of complexity:

1. Lower Layers: Detect basic edges, lines, and simple colors.

2. Middle Layers: Combine edges into shapes like circles, spikes, or curves (petals and stems).

3. Upper Layers: Recognize high-level botanical structures and textures unique to specific families.
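To make the "lower layers detect edges" idea concrete, here is a minimal, library-free sketch of a single convolution filter. It is an illustration only: the `convolve2d` helper, the toy image, and the hand-written Sobel kernel are assumptions for this example, whereas a trained CNN learns its own kernels from data.

```python
# Illustrative sketch: one hand-written convolution filter.
# A trained CNN learns many such kernels instead of having them coded by hand.

def convolve2d(image, kernel):
    """Valid (no padding) 2D cross-correlation of a grayscale image with a kernel."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            acc = 0
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        output.append(row)
    return output

# Sobel kernel that responds to vertical edges (dark on the left, bright on the right).
SOBEL_X = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

# Toy 5x5 image: two dark columns (0) followed by three bright columns (9).
toy_image = [[0, 0, 9, 9, 9] for _ in range(5)]

edge_map = convolve2d(toy_image, SOBEL_X)
for row in edge_map:
    print(row)  # each row is [36, 36, 0]
```

The filter responds strongly wherever its window straddles the dark-to-bright boundary and returns zero over the flat bright region. Deeper layers combine many such responses into shapes and textures.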

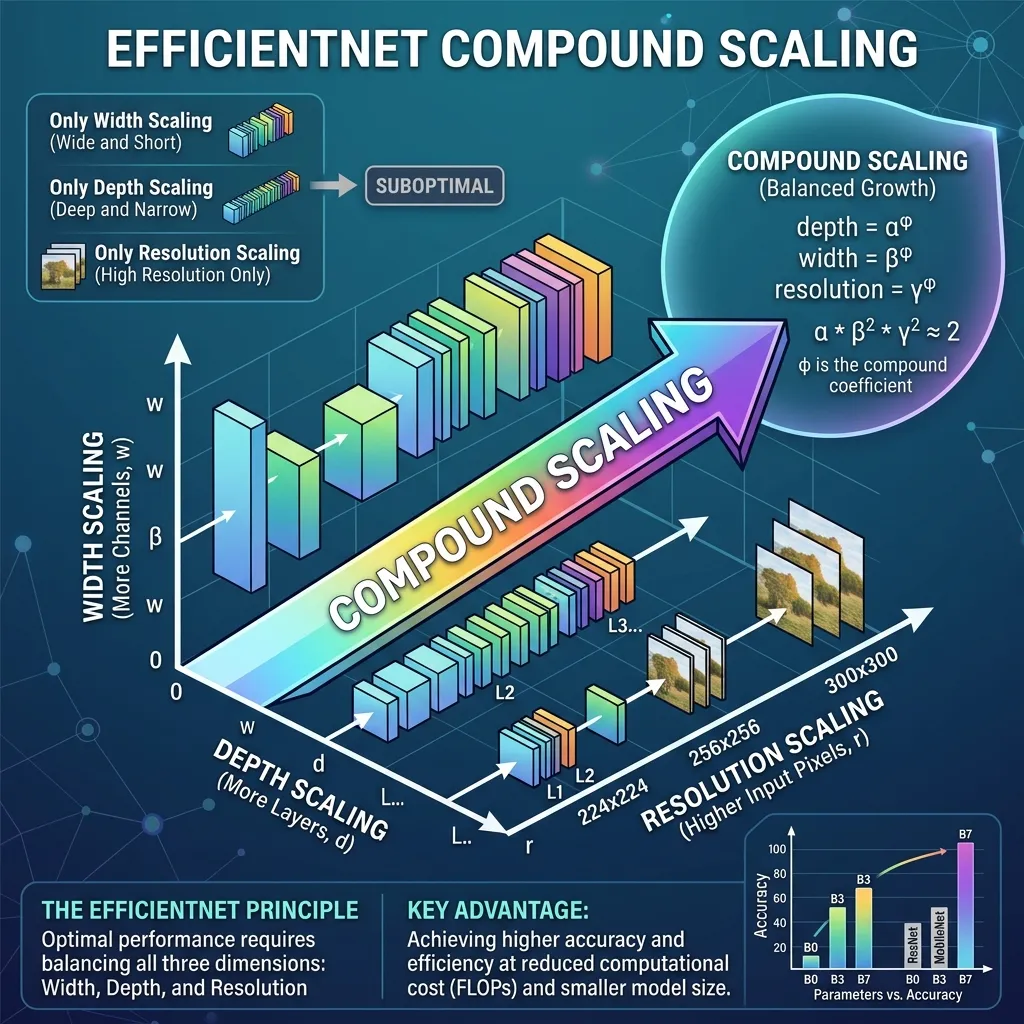

## B) The AI Engine: Why We Use EfficientNetV2

In the world of AI, there's always a trade-off between how big a model is and how fast it runs. BioLens uses EfficientNetV2 because of the Compound Scaling method it inherits from the original EfficientNet.

Traditional models usually scale only one dimension (such as depth or width). EfficientNet scales depth, width, and resolution together, using a fixed set of scaling coefficients. This allows our Python-based engine to achieve higher accuracy on mobile devices than older architectures like ResNet or VGG.
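The scaling rule can be sketched in a few lines. The coefficients below (α = 1.2, β = 1.1, γ = 1.15) are the values reported for the original EfficientNet baseline, not BioLens's own configuration; the function names and the 224px base resolution are assumptions for illustration. Published model variants round and adjust these values, so treat the numbers as indicative.

```python
# Compound scaling sketch: one coefficient phi scales all three dimensions together.
# ALPHA, BETA, GAMMA are the values reported for the original EfficientNet baseline.
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15  # depth, width, resolution bases

def compound_scale(phi, base_depth=1.0, base_width=1.0, base_resolution=224):
    """Return (depth multiplier, width multiplier, input resolution) for a given phi."""
    depth = base_depth * ALPHA ** phi
    width = base_width * BETA ** phi
    resolution = round(base_resolution * GAMMA ** phi)
    return depth, width, resolution

def flops_multiplier(phi):
    """Approximate compute growth: FLOPs scale with depth * width^2 * resolution^2."""
    return (ALPHA * BETA ** 2 * GAMMA ** 2) ** phi

for phi in range(4):
    d, w, r = compound_scale(phi)
    print(f"phi={phi}: depth x{d:.2f}, width x{w:.2f}, "
          f"resolution {r}px, FLOPs x{flops_multiplier(phi):.2f}")
```

Because α·β²·γ² is close to 2, each increment of `phi` roughly doubles the compute budget while keeping the three dimensions in balance.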

The Python Stack we use for Model Training:

- TensorFlow & Keras: For designing and training the deep neural layers.

- OpenCV: For real-time image preprocessing and color space normalization.

- NumPy: For high-speed matrix calculations in the final classification layer.

- Albumentations: For 'Data Augmentation'—training the AI on rotated, flipped, and blurred images so it works even in poor lighting.
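As a rough picture of what data augmentation does, the sketch below applies random flips, rotations, and blurs to a tiny grayscale image. It is a library-free stand-in, not the Albumentations API and not our training pipeline: every function name here is hypothetical, and a real pipeline would operate on full-size image arrays.

```python
import random

def horizontal_flip(image):
    """Mirror the image left to right."""
    return [row[::-1] for row in image]

def rotate_90(image):
    """Rotate the image clockwise by 90 degrees."""
    return [list(col) for col in zip(*image[::-1])]

def box_blur(image):
    """3x3 mean filter; border pixels average over the neighbours that exist."""
    h, w = len(image), len(image[0])
    blurred = []
    for r in range(h):
        row = []
        for c in range(w):
            neighbours = [
                image[i][j]
                for i in range(max(0, r - 1), min(h, r + 2))
                for j in range(max(0, c - 1), min(w, c + 2))
            ]
            row.append(sum(neighbours) / len(neighbours))
        blurred.append(row)
    return blurred

def augment(image, rng):
    """Apply each transform independently with probability 0.5."""
    for transform in (horizontal_flip, rotate_90, box_blur):
        if rng.random() < 0.5:
            image = transform(image)
    return image

sample = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 9],
]
rng = random.Random(42)  # seeded so the variants are reproducible
variants = [augment(sample, rng) for _ in range(4)]
for variant in variants:
    print(variant)
```

Each call yields a slightly different view of the same flower, which is how a single labelled photo can stand in for many capture conditions during training.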

- Precision: 95.8% for most common flowering species.

- Top-3 Reliability: In 98.4% of cases, the correct species is within our top 3 suggestions.

- Vector Verification: We use a secondary 'Browser-AI' (Phase 2) to verify results locally on your device for added precision.

- Masked Autoencoders (MAE): Allowing the AI to learn from partially obscured images with incredible accuracy.

- Dynamic Scaling: Instead of fixed scaling, the model adjusts its depth 'on-the-fly' based on image complexity.

- Zero-Shot Learning: The ability for the AI to identify a flower it has never seen before based on descriptive correlations.

## C) Achieving 95%+ Accuracy

BioLens isn't just a general AI; it is specifically fine-tuned on a curated dataset of over 2.5 million botanical samples. Through a process called Transfer Learning, we start with a model that already 'understands' general objects and then refine it specifically on floral data.

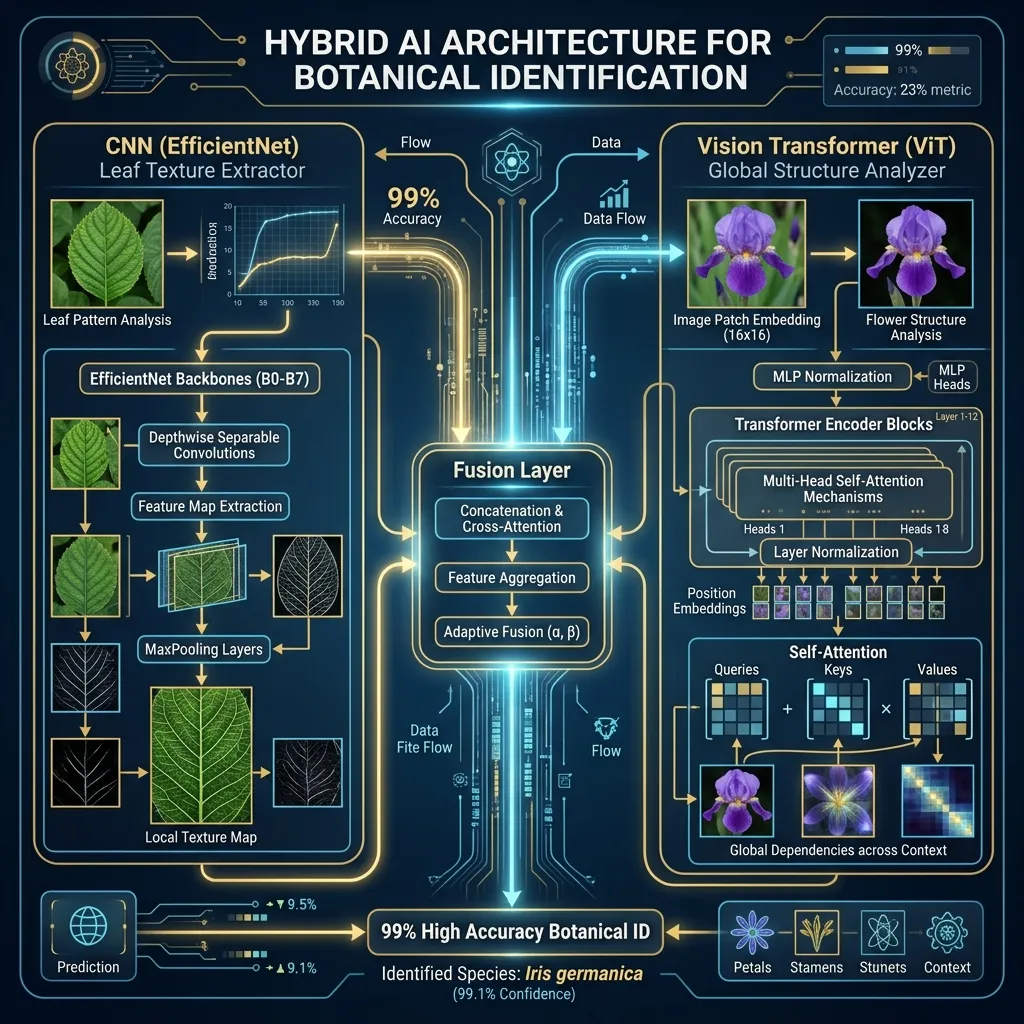

## D) The Horizon: EfficientNet V3 Concept & Next-Gen Advancements

While EfficientNetV2 is currently the gold standard for efficient convolutional networks, the field of AI is moving towards even more sophisticated architectures. Researchers often refer to the next evolution as 'EfficientNet V3'—a conceptual leap that integrates Vision Transformers (ViT) with standard CNNs.

Hybrid Architecture: The Best of Both Worlds

The latest advancements involve Hybrid Models (like CoAtNet or MaxViT). These models use convolutions (CNN) to handle fine local textures like petal veins, while using 'Self-Attention' mechanisms (Transformers) to understand the global structure of the flower as a whole.

Key Advancements in 2025-2026:

## E) Model Algorithm Comparison

How does our engine stack up against traditional and next-gen competitors?

| Algorithm | Architecture Type | Key Strength | Accuracy Range | BioLens Status |
|---|---|---|---|---|
| Hybrid (CoAtNet) | CNN + Transformer | Global Context Awareness | 98.0% - 99.2% | Research Phase |
| EfficientNetV2 | ConvNet (Optimized) | Best Speed/Accuracy Balance | 95.2% - 98.4% | Primary Engine |
| ConvNeXt | Modernized ConvNet | Robustness to distortions | 94.8% | Secondary Check |
| ResNet-50 | Legacy ConvNet | Stable but memory heavy | 92.1% | Legacy Support |
| MobileNetV3 | Lightweight ConvNet | Extreme speed on mobile | 89.5% | Local Browser AI |

## Explore Our AI Tools

Ready to see the technology in action? Try our high-precision identifiers, which are trained on the latest EfficientNet models.

## Future of Botanical AI: Prerendering & SSR

To ensure our botanical database is accessible to everyone (and every search engine), BioLens uses Server-Side Rendering (SSR). The technical details and botanical names are 'prerendered' on the server, so when Google or Bing crawls BioLens, the crawler receives the full scientific data in the initial HTML without needing to execute complex JavaScript. This keeps our identification pages indexable worldwide.
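The idea behind prerendering can be shown with a minimal sketch: the server builds the complete HTML, including the botanical names, before sending anything to the client. The `render_species_page` function and its fields are hypothetical and do not describe our production rendering stack.

```python
from html import escape

def render_species_page(common_name, scientific_name, family):
    """Return a complete HTML document with the botanical data already in the markup."""
    return (
        "<!DOCTYPE html>\n"
        '<html lang="en">\n'
        f"<head><title>{escape(common_name)} ({escape(scientific_name)})</title></head>\n"
        "<body>\n"
        f"  <h1>{escape(common_name)}</h1>\n"
        f"  <p><em>{escape(scientific_name)}</em>, family {escape(family)}</p>\n"
        "</body>\n"
        "</html>\n"
    )

# The crawler receives this string as-is; no client-side script has to run first.
page = render_species_page("Common daisy", "Bellis perennis", "Asteraceae")
print(page)
```

Because the species name and family are plain text in the response body, a crawler that never runs JavaScript still sees them.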